Accuracy plateau at 65–75%

Even frontier models reach only 65–75% accuracy on complex multi‑domain tasks. In practice, roughly one answer in three needs manual review or a complete rework.

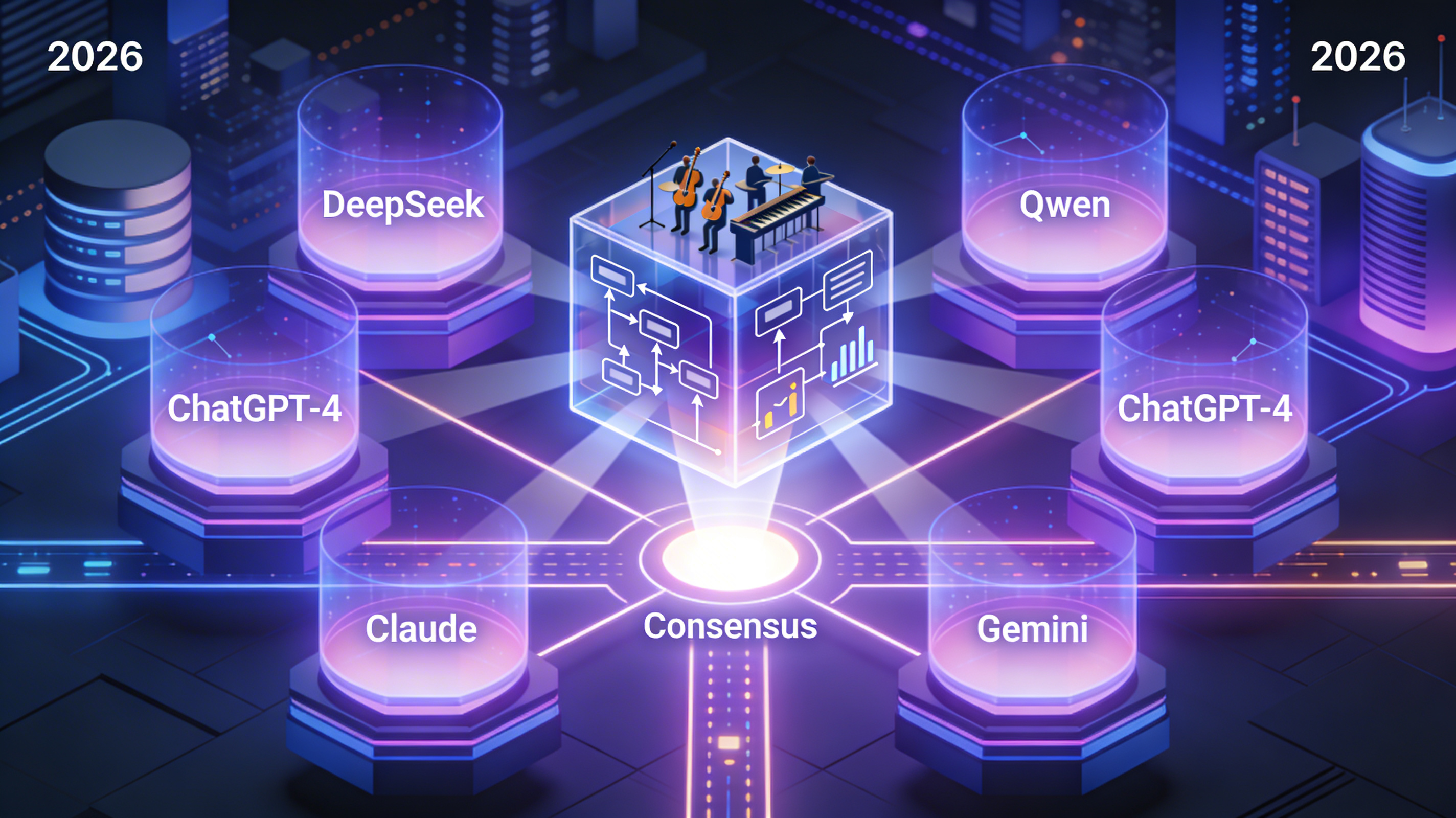

CAIS is a revolutionary meta‑orchestrator for parallel LLMs: it runs 5+ independent models simultaneously, synthesizes their answers through a four‑level consensus, detects looping errors in real time, and turns verified solutions into reusable modules stored in a vector knowledge base.

Why CAIS represents a paradigm shift in AI system architecture

Today's single LLMs have hit a fundamental ceiling. They reach only 65–75% accuracy on complex tasks; 12–18% of requests fall into infinite loops, burning hundreds of tokens for nothing; and there is no learning mechanism, so every task is solved from scratch. This makes them unsuitable for mission‑critical applications without costly human verification.

CAIS runs 5+ specialized LLMs in parallel through OpenRouter (300+ models), synthesizes a single superior solution through a four‑level consensus, detects loops in real time (F1 score 0.72), and turns solutions into modules, creating a self‑learning system whose cost falls exponentially.

Why single‑LLM architectures fail at scale: fundamental limitations that can't be patched

12–18% of complex requests end in infinite loops: the model repeats the same mistake for 500–2000 tokens, burning budget without getting any closer to a solution.

Models do not retain knowledge between sessions. A company that has solved 1,000 similar tasks spends as much on the 1,001st as on the first: there are no economies of scale.

Analysis → generation → verification → refinement: every step runs sequentially. A real task stretches to 40–60 seconds, even before human review.

"We had to hire a 3-person QA team just to check GPT‑4 outputs. 70% accuracy sounds high until you realize it's 30,000 failed answers per month that could've gone into production."

"Our LLM pipeline sometimes gets stuck repeating the same hallucination. We've burned $40K in wasted tokens before we built manual circuit breakers. There has to be a better way."

CAIS: A meta‑orchestrator that treats LLMs as parallel agents and fuses their reasoning into a synthetic consensus

Instead of forcing one model to iterate 5–8 times, CAIS dispatches the task to 5+ specialized LLMs at once and waits for a first wave of coherent answers before converging.

CAIS does not vote for the "best" answer. It synthesizes a new solution by combining the strongest reasoning fragments from each model and resolving conflicts through objective checks (tests, fact‑checking, computation).
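One way to picture fragment‑level synthesis with objective checks is the minimal sketch below. It is an illustration under stated assumptions, not the CAIS implementation: the `synthesize` function, the per‑model dictionaries of fragments, and the `check` callback are all hypothetical names introduced here.

```python
from typing import Any, Callable, Dict

def synthesize(
    proposals: Dict[str, Dict[str, Any]],
    check: Callable[[str, Any], bool],
) -> Dict[str, Any]:
    """Merge per-model fragments, keeping the first value per key that passes `check`.

    `proposals` maps a (hypothetical) model name to {fragment key -> proposed value}.
    `check` stands in for an objective verifier such as a test or a computation.
    """
    merged: Dict[str, Any] = {}
    for _model, fragments in proposals.items():
        for key, value in fragments.items():
            if key not in merged and check(key, value):
                merged[key] = value
    return merged

if __name__ == "__main__":
    # Two models disagree about the sum and product of 2 and 3.
    proposals = {
        "model-a": {"sum": 5, "product": 7},
        "model-b": {"sum": 4, "product": 6},
    }
    truth = {"sum": 2 + 3, "product": 2 * 3}
    print(synthesize(proposals, lambda k, v: truth[k] == v))
```

Neither model is entirely right in this toy case, yet the merged result takes the verified fragment from each, which is the difference from picking a single winning answer.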

A hybrid algorithm (TF‑IDF + BERT embeddings + pattern statistics) monitors the token stream in real time and interrupts looping models at the 50–100 token mark, saving 50–100× the cost of failed iterations.

Every solution with confidence > 0.85 is automatically decomposed into a general‑purpose module with a JSON schema, an implementation, tests, and metadata, and is added to the vector knowledge base for reuse.
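The modularization gate can be sketched as a small record type plus a threshold check. Only the 0.85 threshold and the four parts of a module (JSON schema, implementation, tests, metadata) come from the text; the `SkillModule` class, its field names, and `maybe_modularize` are assumptions made for illustration, and the embedding and vector‑store step is left out.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import Optional

CONFIDENCE_THRESHOLD = 0.85  # threshold stated in the text

@dataclass
class SkillModule:
    """Hypothetical shape of a reusable module."""
    name: str
    json_schema: dict
    implementation: str
    tests: list
    metadata: dict = field(default_factory=dict)

def maybe_modularize(
    name: str, confidence: float, json_schema: dict, implementation: str, tests: list
) -> Optional[SkillModule]:
    """Return a module only when confidence is strictly above the threshold."""
    if confidence <= CONFIDENCE_THRESHOLD:
        return None
    return SkillModule(name, json_schema, implementation, tests, {"confidence": confidence})

if __name__ == "__main__":
    module = maybe_modularize(
        "add_numbers",
        0.91,
        {"type": "object", "properties": {"a": {"type": "number"}, "b": {"type": "number"}}},
        "def add_numbers(a, b):\n    return a + b\n",
        ["assert add_numbers(2, 3) == 5"],
    )
    # A JSON-serializable record is what would be embedded and stored.
    print(json.dumps(asdict(module), indent=2))
```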

Six-layer architecture: from task intake to continuous learning through modularization

OpenRouter as the switching fabric: 300+ models, unified API, automatic fallback, transparent pricing

OpenRouter acts as a unified gateway to the entire LLM ecosystem, enabling CAIS to be model‑agnostic, cost‑optimized, and resilient by design.

OpenAI‑compatible interface for 300+ models. Switching from GPT‑4o to Claude or DeepSeek is just changing the model name — no code rewrite.

If a provider is down or rate‑limited, OpenRouter automatically reroutes to alternative models without CAIS code changes.

Real‑time access to per‑model pricing allows CAIS to build cost‑aware selection strategies, balancing quality vs budget.

Enterprises can bring their own keys (OpenAI, Anthropic) while still using OpenRouter's routing and observability.
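As a rough illustration of cost‑aware selection, the sketch below greedily fills a panel by quality per dollar under a budget. The `Candidate` fields, the model names, the quality scores, and the prices are all made‑up placeholders, and the greedy rule is one simple strategy among many rather than the one CAIS uses.

```python
from dataclasses import dataclass
from typing import List

@dataclass(frozen=True)
class Candidate:
    model: str
    quality: float          # hypothetical quality score in [0, 1]
    usd_per_request: float  # hypothetical estimated cost per request

def select_panel(candidates: List[Candidate], budget_usd: float, panel_size: int) -> List[Candidate]:
    """Pick up to `panel_size` models, best quality-per-dollar first, within budget."""
    ranked = sorted(candidates, key=lambda c: c.quality / c.usd_per_request, reverse=True)
    panel: List[Candidate] = []
    spent = 0.0
    for cand in ranked:
        if len(panel) == panel_size:
            break
        if spent + cand.usd_per_request <= budget_usd:
            panel.append(cand)
            spent += cand.usd_per_request
    return panel

if __name__ == "__main__":
    pool = [
        Candidate("model-a", 0.90, 0.010),
        Candidate("model-b", 0.80, 0.004),
        Candidate("model-c", 0.60, 0.001),
        Candidate("model-d", 0.95, 0.030),
    ]
    for cand in select_panel(pool, budget_usd=0.016, panel_size=3):
        print(cand.model, cand.usd_per_request)
```

A production selector would likely also weigh domain fit and latency; those factors are omitted here to keep the trade‑off between quality and budget visible.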

CAIS dynamically composes the LLM panel based on multiple factors:

curl https://openrouter.ai/api/v1/chat/completions \
  -H "Authorization: Bearer $OPENROUTER_API_KEY" \
  -H "HTTP-Referer: https://cais.app" \
  -H "X-Title: CAIS v3.1 Meta Orchestrator" \
  -H "Content-Type: application/json" \
  -d '{
    "model": "anthropic/claude-3.5-sonnet",
    "messages": [
      {
        "role": "system",
        "content": "You are a specialized code reviewer for Python systems."
      },
      {
        "role": "user",
        "content": "Review this async function for race conditions..."
      }
    ],
    "temperature": 0.2,
    "max_tokens": 2000,
    "route": "fallback",
    "models": [
      "anthropic/claude-3.5-sonnet",
      "openai/gpt-4o",
      "deepseek/deepseek-v3"
    ]
  }'

CAIS makes a similar call for each model in the panel in parallel, collects the responses, and synthesizes a consensus. OpenRouter provides automatic fallback if the primary model is unavailable.
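The parallel fan‑out described here can be sketched with `asyncio`. This is a minimal illustration, not CAIS code: `call_model` is a hypothetical stand‑in that simulates a network call instead of issuing a real HTTP request, and `broken/model` is a made‑up name used to show that one failing provider does not sink the whole panel.

```python
import asyncio
from typing import List

async def call_model(model: str, prompt: str) -> dict:
    """Hypothetical stand-in for one chat-completions request."""
    await asyncio.sleep(0.01)  # simulate network latency
    if model == "broken/model":
        raise RuntimeError("provider unavailable")
    return {"model": model, "answer": f"[{model}] reply to: {prompt}"}

async def fan_out(models: List[str], prompt: str) -> List[dict]:
    """Send the same prompt to every model concurrently and keep the successes."""
    tasks = [call_model(m, prompt) for m in models]
    # return_exceptions=True keeps one failure from cancelling the other calls.
    results = await asyncio.gather(*tasks, return_exceptions=True)
    return [r for r in results if not isinstance(r, BaseException)]

if __name__ == "__main__":
    panel = ["anthropic/claude-3.5-sonnet", "broken/model", "openai/gpt-4o"]
    for reply in asyncio.run(fan_out(panel, "Review this async function")):
        print(reply["model"])
```

Because the calls run concurrently, total latency is close to that of the slowest surviving model rather than the sum of all of them.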

Real‑time hybrid algorithm that saves 50–100× token waste by detecting and terminating infinite loops

When faced with a difficult problem, LLMs sometimes enter silent infinite loops: they rephrase the same flawed reasoning pattern over hundreds of tokens without making progress. This is invisible to users until timeout or manual interruption, burning $0.015–$0.06 per failed attempt.

Final score = 0.4 × TF‑IDF + 0.4 × BERT + 0.2 × pattern.

If final score > 0.88 → terminate stream, save wasted tokens, trigger fallback.
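The weighted score and cutoff above can be sketched as follows. Only the weights (0.4, 0.4, 0.2) and the 0.88 threshold come from the text. Everything else is an assumption made to keep the example self‑contained: a plain term‑count cosine stands in for TF‑IDF, the embedding similarity is passed in as a callable rather than computed with BERT, and the pattern signal is a repeated‑trigram ratio.

```python
import math
from collections import Counter
from typing import Callable, Sequence

W_LEXICAL, W_SEMANTIC, W_PATTERN = 0.4, 0.4, 0.2  # weights from the text
THRESHOLD = 0.88                                   # cutoff from the text

def cosine_tf(a: Sequence[str], b: Sequence[str]) -> float:
    """Cosine similarity of raw term counts (simplified stand-in for TF-IDF)."""
    ca, cb = Counter(a), Counter(b)
    dot = sum(ca[t] * cb[t] for t in ca)
    norm = math.sqrt(sum(v * v for v in ca.values())) * math.sqrt(sum(v * v for v in cb.values()))
    return dot / norm if norm else 0.0

def repeated_ngram_ratio(tokens: Sequence[str], n: int = 3) -> float:
    """Fraction of n-grams in the window that duplicate an earlier n-gram."""
    grams = [tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)]
    return 1.0 - len(set(grams)) / len(grams) if grams else 0.0

def loop_score(prev: Sequence[str], curr: Sequence[str],
               semantic_sim: Callable[[Sequence[str], Sequence[str]], float]) -> float:
    """Weighted blend of lexical, semantic, and pattern signals for two windows."""
    return (W_LEXICAL * cosine_tf(prev, curr)
            + W_SEMANTIC * semantic_sim(prev, curr)
            + W_PATTERN * repeated_ngram_ratio(list(prev) + list(curr)))

def should_terminate(prev: Sequence[str], curr: Sequence[str],
                     semantic_sim: Callable[[Sequence[str], Sequence[str]], float]) -> bool:
    return loop_score(prev, curr, semantic_sim) > THRESHOLD

if __name__ == "__main__":
    window = ["retry", "the", "call"] * 4
    # The lambda is a placeholder for an embedding-based similarity.
    print(should_terminate(window, window, lambda a, b: 1.0))
```

In a streaming setting, `prev` and `curr` would be consecutive token windows, and a positive result would trigger the stream termination and fallback described above.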

Exponential cost reduction through continuous learning: from $0.004 to $0.0002 per request over 24 months
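To make the two endpoints concrete, the sketch below derives the monthly decay factor they imply. It assumes a constant month‑over‑month reduction, which the source does not state; only the $0.004 and $0.0002 figures and the 24‑month span come from the heading, and the interpolated values are not data from the source.

```python
START_COST = 0.004   # USD per request at month 0 (from the heading)
END_COST = 0.0002    # USD per request at month 24 (from the heading)
MONTHS = 24

# Assumption: cost shrinks by the same factor every month.
MONTHLY_FACTOR = (END_COST / START_COST) ** (1 / MONTHS)

def cost_at(month: float) -> float:
    """Cost per request after `month` months under constant exponential decay."""
    return START_COST * MONTHLY_FACTOR ** month

if __name__ == "__main__":
    print(f"monthly factor: {MONTHLY_FACTOR:.4f}")
    for m in (0, 6, 12, 18, 24):
        print(f"month {m:2d}: ${cost_at(m):.6f}")
```

Under that assumption the factor is about 0.883, a decline of roughly 11.7% per month, which puts the midpoint at month 12 near $0.00089 per request.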

B2B‑first SaaS with enterprise licensing, skill marketplace, and exceptional unit economics (LTV:CAC 13.5:1)

Four-phase journey from PoC to enterprise‑ready platform over 27 months: $3.36M total investment

Михаил Дейнекин — Lead Engineer & AI Architect

"Single LLMs have reached a theoretical accuracy ceiling. The next breakthrough lies not in adding parameters but in building collective intelligence through parallel consensus systems. CAIS is not just a tool; it is a new paradigm of AI infrastructure for mission-critical tasks."

Open to discussions with potential co-founders, advisors, and early-stage investors passionate about next-gen AI infrastructure.

CAIS is not an incremental improvement; it's a fundamental rethinking of how AI systems should work. We're building the meta-orchestrator that will power the next generation of mission-critical AI applications.